Signing a contract you haven’t pressure-tested is like driving at night with your headlights off. You might be fine for a while, until you aren’t.

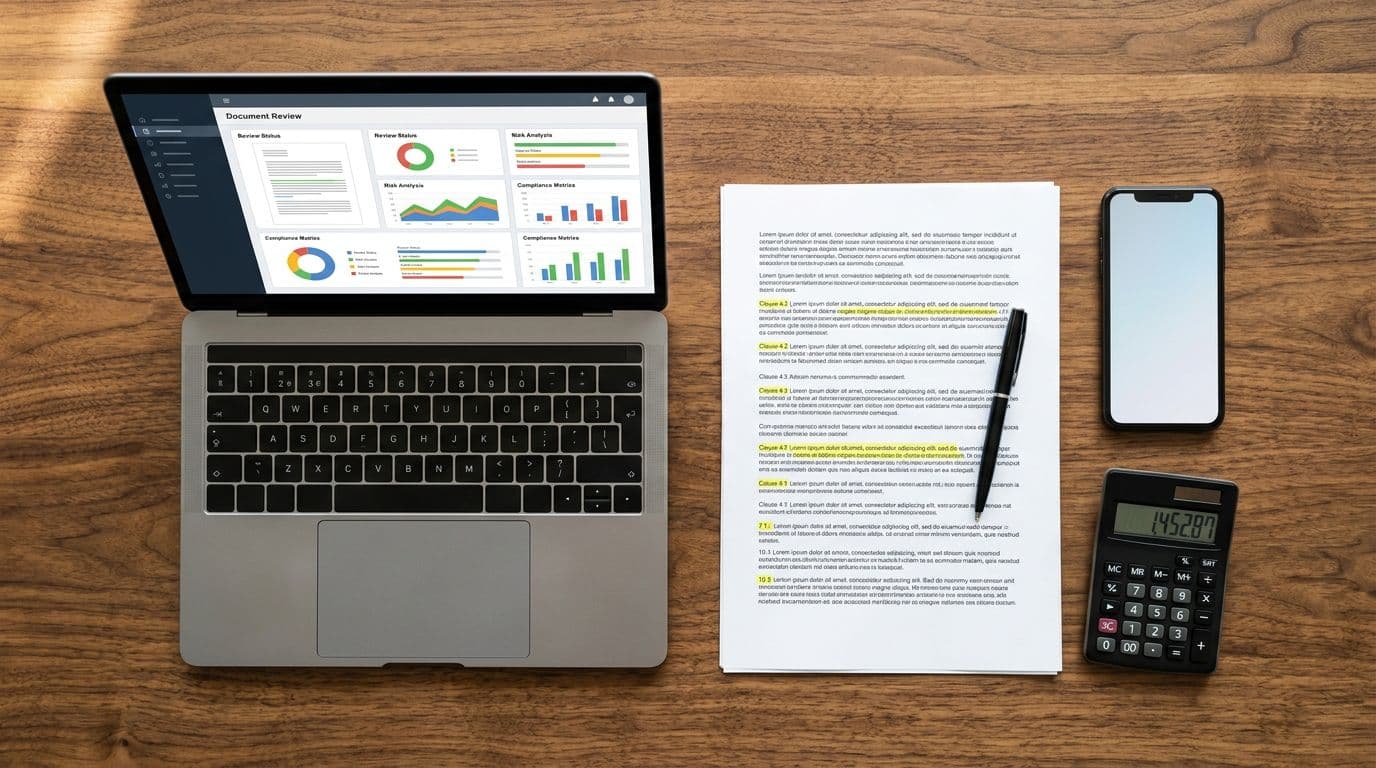

In March 2026, I see more small US businesses using AI contract review tools as a first-pass filter. Not to replace counsel, but to stop obvious landmines before a deal gets momentum. The best tools help you read faster, ask better questions, and keep your fallback terms consistent.

This buyer guide is how I’d choose a tool for a small team that signs vendor MSAs, SaaS subscriptions, SOWs, NDAs, and the occasional customer contract.

What I expect from AI contract review tools in 2026 (small business edition)

For small teams, the “best” tool is the one that matches your contract reality. That usually means: low volume, high variety, and not much time to tune complex systems.

Here’s what I look for first:

1) Fast intake for messy files. I want clean handling of PDFs, scanned docs, and Word files. If the tool fails on real-world formatting, it won’t get used.

2) Clause-level risk flags with plain explanations. A highlight is useless if the tool can’t tell me why it matters. I prefer tools that explain the risk in business terms (cost, liability, lock-in) and point to the exact language.

3) A playbook that mirrors my fallback positions. Even for a small company, “market” terms aren’t enough. I want a way to encode basics like net-30, mutual NDA language, and caps tied to fees paid.

4) Redlines that don’t break negotiation flow. In practice, most negotiation still happens in Microsoft Word. If the tool can’t support that workflow, adoption drops fast.

5) Security and privacy controls that match customer expectations. At minimum, I want clear answers on data retention, model training, and access controls. If a vendor can’t explain these plainly, I treat that as a risk signal.

I don’t buy a contract AI for “smart summaries.” I buy it for repeatable issue-spotting and consistent fallback language.
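To make item 3 concrete, here is a minimal sketch of a playbook written as data, with a check that reports deviations. Everything in it is an assumption for illustration: the `ExtractedTerms` shape, the `PLAYBOOK` keys, and the threshold values are hypothetical, and the checks assume I am the buyer (so I want at least 30 days to pay and a cap of at least 12 months of fees). It is not how any particular tool stores its playbook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExtractedTerms:
    """Key terms pulled from one contract, by a tool or by hand (hypothetical shape)."""
    payment_days: int           # 30 means net-30
    nda_is_mutual: bool
    cap_months_of_fees: float   # liability cap expressed as months of fees paid


# Hypothetical buyer-side fallback positions; replace with your own.
PLAYBOOK = {
    "min_payment_days": 30,
    "require_mutual_nda": True,
    "min_cap_months_of_fees": 12.0,
}


def find_deviations(terms: ExtractedTerms, playbook: dict = PLAYBOOK) -> list[str]:
    """Return a plain-language note for every term that falls outside the playbook."""
    deviations = []
    if terms.payment_days < playbook["min_payment_days"]:
        deviations.append(
            f"Payment due in {terms.payment_days} days; "
            f"fallback is net-{playbook['min_payment_days']}."
        )
    if playbook["require_mutual_nda"] and not terms.nda_is_mutual:
        deviations.append("Confidentiality is one-way; fallback is mutual.")
    if terms.cap_months_of_fees < playbook["min_cap_months_of_fees"]:
        deviations.append(
            f"Liability cap is {terms.cap_months_of_fees:g} months of fees; "
            f"fallback is {playbook['min_cap_months_of_fees']:g}."
        )
    return deviations


if __name__ == "__main__":
    draft = ExtractedTerms(payment_days=15, nda_is_mutual=False, cap_months_of_fees=12.0)
    for note in find_deviations(draft):
        print("-", note)
```

Even if the tool I buy has its own playbook editor, writing my positions down this plainly first tells me whether I actually have fallback positions or just preferences.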

The real risks these tools should catch (and the misses I still see)

Small US businesses tend to lose money in predictable places. The tool should be good at finding those, not just paraphrasing.

I put extra weight on whether the AI reliably surfaces:

- Auto-renewal and notice windows (silent renewals, short cancellation windows, written notice requirements)

- One-sided limitation of liability (tiny caps, caps that exclude key damages, caps that don’t cover confidentiality breaches)

- Indemnity traps (broad indemnities, IP indemnity that doesn’t flow both ways, defense-control language)

- Data terms (security obligations, breach notice timing, sub-processor language, audit rights)

- Payment and suspension (late payment penalties, unilateral price increases, service suspension triggers)

- Assignment and change of control (vendor can assign to anyone, you can’t)

At the same time, I plan for what AI still misses.

First, context and business intent often get lost. A clause that’s fine in a low-risk SaaS subscription can be unacceptable in a data-heavy integration. Second, negotiated documents contain “creative drafting.” Models can misread it, especially when definitions are buried.

So I build a workflow where the tool produces a short list of issues, then I verify the top risks myself and escalate only what matters.

Treat AI output like a junior reviewer: helpful at spotting patterns, unreliable at making final calls.
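One item from the list above that I can verify by hand in a minute is the renewal notice window. The sketch below assumes the notice period is counted in calendar days back from the renewal date. That is an assumption, not a rule: some contracts count business days or require notice to be received rather than sent, so I read the clause itself. The function names are hypothetical.

```python
from datetime import date, timedelta


def last_notice_date(renewal_date: date, notice_days: int) -> date:
    """Latest calendar date to give notice, counting back from the renewal date."""
    return renewal_date - timedelta(days=notice_days)


def days_left_to_cancel(renewal_date: date, notice_days: int, today: date) -> int:
    """Days remaining before the notice window closes (negative means it has closed)."""
    return (last_notice_date(renewal_date, notice_days) - today).days


if __name__ == "__main__":
    renewal = date(2026, 12, 1)
    print(last_notice_date(renewal, 60))                        # 2026-10-02
    print(days_left_to_cancel(renewal, 60, date(2026, 9, 1)))   # 31
```

If the tool extracts a renewal date and notice period, I compare its output to this kind of manual check on a few contracts before trusting it across the rest.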

Tool categories and a quick comparison (what I’d pick for different teams)

When people say “AI contract review,” they often mean different product types. This is the mental model I use before I even look at pricing.

Here’s a quick way to compare the common approaches:

| Tool type | Best fit in a small business | Strengths | Watch-outs |
|---|---|---|---|
| Upload-and-analyze (PDF/Doc) | Owner-led review of vendor contracts | Quick risk flags, summaries, key term extraction | Can over-summarize, sometimes weak on negotiated language |
| Word-first redlining | Sales, ops, or legal reviewing in Word | Faster negotiation cycles, comments and redlines in place | Setup quality matters, requires consistent playbook inputs |
| Playbook and deviation tracking | Teams with standard terms and repeat deals | Enforces your fallback positions, highlights deviations | Needs clean templates and governance, false alarms if playbook is vague |
| Portfolio risk view | Companies managing many vendor contracts | Finds renewal risk and outliers across a set | Overkill for low contract volume |

In the 2026 market, tools often blend these categories. For example, some small teams gravitate toward lightweight reviewers (like goHeather or Legly) for quick clause flagging, while others prefer negotiation-oriented systems (like DocJuris) when multiple stakeholders comment during live deals. I treat brand names as secondary. Workflow fit comes first.

If Word is central for you, it’s worth reading how vendors frame Word-based review and drafting. Definely, for example, emphasizes Word-native review for legal teams in its write-up on AI contract review software workflows. I don’t take vendor blogs as neutral research, but they can help you clarify what “good” looks like in practice.

Buying checklist and a 2-week pilot plan I’d actually run

I avoid big rollouts. Instead, I run a short pilot that forces the tool to prove value on real contracts, with real deadlines.

Buying checklist (keep it simple):

- Your top 10 clauses: Can it find and explain them reliably (renewal, liability cap, indemnity, data terms)?

- Editable playbooks: Can I set fallback language and acceptable ranges?

- Word compatibility: Can my team keep working the way they already negotiate?

- Auditability: Can I export issues, notes, and a review record for file hygiene?

- Data handling: Is the vendor clear about retention, training, and access control?

- Pricing sanity: Does it price per user, per document, or per workspace, and does that match my volume?
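For the pricing question, a back-of-envelope comparison is usually enough. The sketch below is only arithmetic: every price and volume in it is a made-up placeholder, not a quote from any vendor, and the function names are hypothetical. I plug in my own headcount, monthly contract volume, and the vendor’s actual price sheet.

```python
def annual_cost_per_user(users: int, price_per_user_month: float) -> float:
    """Annual cost when the vendor charges per seat."""
    return users * price_per_user_month * 12


def annual_cost_per_document(docs_per_month: int, price_per_doc: float) -> float:
    """Annual cost when the vendor charges per reviewed document."""
    return docs_per_month * price_per_doc * 12


def annual_cost_per_workspace(flat_price_month: float) -> float:
    """Annual cost when the vendor charges a flat workspace fee."""
    return flat_price_month * 12


def cheapest_model(costs: dict[str, float]) -> str:
    """Name of the pricing model with the lowest annual cost."""
    return min(costs, key=costs.get)


if __name__ == "__main__":
    # Placeholder numbers only; replace with real quotes and your own volume.
    costs = {
        "per_user": annual_cost_per_user(users=3, price_per_user_month=50.0),
        "per_document": annual_cost_per_document(docs_per_month=10, price_per_doc=10.0),
        "per_workspace": annual_cost_per_workspace(flat_price_month=200.0),
    }
    for model, cost in costs.items():
        print(f"{model}: {cost:.2f} per year")
    print("Cheapest at this volume:", cheapest_model(costs))
```

The point is the shape of the comparison: at low volume, per-document pricing often looks different from per-seat pricing, and the answer flips as volume grows.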

A practical 14-day pilot: On days 1 and 2, I upload five contracts we’ve already signed (the messy ones). On days 3 to 7, I run the tool on active deals and compare its flags to what my team would’ve caught. Then, in week two, I tune a mini-playbook and see whether false positives go down without losing real issues.

If the tool doesn’t save time by day 10, I stop.
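To keep the pilot comparison honest, I’d score each contract the same way. This is a rough sketch, assuming both the tool’s flags and my team’s findings can be reduced to short issue labels (the labels and the `score_flags` name are hypothetical). Note that a flag the team did not list is not automatically a false positive: it may be a real issue we missed, so I review those by hand.

```python
def score_flags(tool_flags: set[str], team_flags: set[str]) -> dict:
    """Compare the tool's flagged issues with the issues the team identified."""
    caught = tool_flags & team_flags    # issues both found
    missed = team_flags - tool_flags    # issues the tool failed to flag
    extra = tool_flags - team_flags     # tool-only flags: noise or things we missed
    precision = len(caught) / len(tool_flags) if tool_flags else 0.0
    recall = len(caught) / len(team_flags) if team_flags else 0.0
    return {
        "caught": sorted(caught),
        "missed": sorted(missed),
        "extra": sorted(extra),
        "precision": precision,
        "recall": recall,
    }


if __name__ == "__main__":
    tool = {"auto_renewal", "liability_cap", "governing_law"}
    team = {"auto_renewal", "liability_cap", "indemnity", "data_breach_notice"}
    result = score_flags(tool, team)
    print("Missed by tool:", result["missed"])
    print(f"Precision: {result['precision']:.2f}, recall: {result['recall']:.2f}")
```

Tracking these two numbers before and after tuning the mini-playbook in week two shows whether false positives dropped without the tool starting to miss real issues.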

FAQ: AI contract review for US small businesses

Are AI contract review tools a substitute for a lawyer?

No. I use them to spot issues early and reduce avoidable back-and-forth. High-risk clauses still need counsel.

What contracts are the best fit for AI review?

Vendor MSAs, SaaS agreements, SOWs, NDAs, and basic customer terms work well. Highly negotiated, one-off deals need more human review.

Can I use these tools for regulated data (health, finance)?

Sometimes, but I require clear security answers first (retention, access controls, and whether content trains models). If those answers are vague, I don’t upload sensitive data.

Do these tools work for state-specific contract rules?

They can help, but I don’t assume jurisdictional accuracy. I treat governing law and venue clauses as mandatory manual checks.

Where I’d start this week

I’d pick one workflow, not a feature set. If I live in Word, I’d pilot a Word-first tool. If I mostly sign vendor PDFs, I’d start with an upload-and-analyze tool. Either way, my goal is fewer missed clauses, not prettier summaries.

If you already know your top risks, you’re ready to test. If you don’t, that’s the first task.
