Can AI dream, or are we projecting human traits onto code? Here is the short answer I keep coming back to: AI is not conscious, but it can decode and simulate dream-like patterns in striking ways. In 2025, research on brain signals and text-guided image edits has made dream “reconstruction” more visual and more flexible. That said, what looks dream-like is still pattern generation, not lived experience.

I tried a small experiment last week. I fed a short dream report into an image model. It gave me three very different scenes: a staircase in the ocean, a city made of glass, and a room lit by floating paper lamps. They felt dream-like, but they did not feel lived. The model stitched patterns from text and images, and it did it fast. No feelings attached.

Here is what you will find below: what dreams are, what the science actually shows, where it matters in practice, and what it might take for AI to truly “dream.” Before we dive in, if you want to know who runs this site and how we test tools, see the quick background in About AI Flow Review.

What We Mean by Dreams, and What “Can AI Dream” Really Asks

When people ask “Can AI dream,” they often mix up style with subjectivity. Human dreams happen in sleep, often during REM, and are soaked with memory and emotion. AI systems output sequences based on patterns in data. They do not feel. They do not wake up surprised or moved.

Why the confusion? Because AI can produce images and stories that look surreal. It can also “hallucinate,” which sounds dreamy. But AI hallucinations are just wrong guesses that sound confident, often from gaps in training data. That is very different from having an inner life.

Key terms, super simple:

- Consciousness: Being aware of experiences, like pain, color, or worry.

- Sentience: The capacity to feel, including pleasure or suffering.

- REM sleep: A sleep stage with rapid eye movement, vivid dreams, and high brain activity.

- AI hallucination: A model’s confident but incorrect output, produced when it fills gaps with plausible-sounding patterns.

- Latent space: A compressed map of patterns a model uses to generate new outputs.

Human dreaming basics: REM sleep, emotion, and memory replay

In REM sleep, your brain is active while your body is still. Emotion centers work hard, and the brain often replays and mixes fragments of recent and older memories. This is why a school hallway can morph into a beach, and your best friend might talk with your grandmother’s voice. Vivid, strange, and somehow meaningful.

If you want a friendly research read on how dream reports show structure and predictability that goes beyond random noise, this 2025 paper offers helpful context: Dreams are more “predictable” than you think.

How AI makes “dream-like” outputs without feelings

Image generators based on diffusion start with noise, then “denoise” step by step, guided by text or other signals. Language models predict the next token based on what came before. Think of AI as a very fast pattern matcher. It blends what it has learned to make outputs that look new. The output can be surreal, but there is no inner movie playing inside.
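To make "predict the next token" concrete, here is a minimal sketch in Python. It is a toy bigram counter, not how production language models work (those use neural networks trained on far more data), and the sample sentence and function names are made up for illustration. The point is only that a system can continue a surreal sentence by counting which word tends to follow which.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows, start, max_words=8):
    """Chain predictions together until we hit the limit or a dead end."""
    out = [start.lower()]
    while len(out) < max_words:
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Invented sample text, loosely based on the dream scenes described earlier.
corpus = "the staircase rose from the ocean and the ocean glowed under paper lamps"
model = train_bigram(corpus)

print(predict_next(model, "paper"))
print(generate(model, "staircase", max_words=5))
```

Nothing in this loop stores a feeling or a point of view. It only looks up counts, which is the sense in which the output is pattern generation rather than experience.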

Why “AI hallucinations” are not dreams

AI hallucinations are not experiences. They are systematic mistakes. For example:

- A model might invent a scientific reference that sounds real.

- It might place a landmark in the wrong city with total confidence.

How to fact-check fast:

- Ask for sources and verify links.

- Cross-check claims against reliable references.

- For images, reverse search or request a citation list.

Dreams are lived from the inside. Hallucinations in AI are just confident outputs that skipped the truth.

What 2025 Science Says: Decoding and Simulating Dreams With AI

The most exciting progress in 2025 sits in two areas. First, tools that infer dream content from brain signals. Second, systems that let people guide dream-like images with simple language.

Here is the key point. AI can help decode or visualize aspects of human dreams, but the AI is not the one dreaming. It is mapping signals to categories, textures, and shapes, then rendering images that line up with the patterns.

Recent highlights you might like:

- A research line known as DreamConnect claims language-guided control over fMRI-driven visuals, described here: From mind to image: Guiding dreams with AI.

- New frameworks explore turning brain activity into coherent video narratives. Early and experimental, but worth tracking: Making Your Dreams A Reality: Decoding the ….

- Broader discussions on how AI and neuroscience intersect with the study of consciousness help frame the hype and the limits, for example this overview from LSE: AI, neuroscience and the magic of consciousness.

Quick takeaways:

- AI can decode patterns, then render rough scenes.

- Outputs can be recognizable, but they are far from perfect.

- Privacy and consent matter a lot with brain data.

fMRI dream reconstruction: what is actually being decoded

Most studies align brain activity with visual categories, shapes, and textures the model already understands. The system then generates images that match those decoded features. The results tend to be blurry or stylized, but you can often tell the rough category, like “a dog” or “a building at night.” This is not word-for-word dream reading. It is pattern matching between brain signals and model knowledge.
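The "pattern matching" step can be sketched as a nearest-centroid classifier. This is a simplified illustration, not the method of any specific study: the three-number "signals" below are invented, real fMRI data has thousands of voxels and heavy preprocessing, and the function names are hypothetical. It shows the basic idea of mapping a new signal to the closest known category.

```python
import math

# Invented toy "signal" vectors per category. These are NOT real brain data.
TRAINING = {
    "dog": [[0.9, 0.1, 0.2], [0.8, 0.2, 0.1]],
    "building": [[0.1, 0.9, 0.3], [0.2, 0.8, 0.4]],
}


def centroid(vectors):
    """Average the example vectors for one category, column by column."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def decode_category(signal, centroids):
    """Return the best-matching label and the similarity score for every label."""
    scores = {label: cosine(signal, c) for label, c in centroids.items()}
    best = max(scores, key=scores.get)
    return best, scores


CENTROIDS = {label: centroid(vectors) for label, vectors in TRAINING.items()}

label, scores = decode_category([0.7, 0.3, 0.2], CENTROIDS)
print(label, {name: round(score, 2) for name, score in scores.items()})
```

Note that the decoder can only ever answer with a label it was given in advance. That is one reason reconstructions land on rough categories rather than a word-for-word account of a dream.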

Limits to keep in mind:

- Reconstructions often miss fine details and multiple objects in a scene.

- Interpretations can be biased by the data used to train the generator.

- Brain data is sensitive. Consent, storage, and use policies must be clear.

For a sense of the rapid progress and open questions, see early-stage work on turning fMRI into richer narratives: Making Your Dreams A Reality: Decoding the ….

Language-guided edits of dream-like images

Some systems let a person nudge a visual toward “calm,” “bright,” or “less crowded” using text prompts. This can help with journaling and reflective practices, and it might support therapy research by giving people more control over how a memory feels to revisit, when used under guidance and with consent.

Practical upsides:

- Creative control for mood boards and visual diaries.

- Possible support for exposure or guided imagery in supervised settings.

Clear cautions:

- Text prompts can inject bias or change meaning.

- Do not treat a stylized output as ground truth about a person’s mind.

- Always keep consent and privacy front and center.

Expert view: AI is not conscious in 2025

The view shared by many researchers is simple. We have no solid evidence that today’s AI systems feel anything. They can simulate creativity and style. They do not have an inner life. A useful read to help separate style from sentience is this short piece in Science: Illusions of AI consciousness. It is a good reminder to celebrate engineering gains without making the leap to “feels like something.”

Why “Can AI Dream” Matters: Real Uses, Risks, and Clear Thinking

This topic affects builders, researchers, and everyday users. Dream-like AI tools can help artists and therapists, and they can also confuse people if the claims jump ahead of the facts. The goal is to get the benefits without inflating expectations. If you are curious how our site stays transparent about recommendations, see How we make money.

Helpful uses today: ideation, therapy support, and synthetic data

Here is where I see real value right now:

- Artists can turn surreal prompts into mood boards fast, then refine with human taste.

- Journalers can map dream notes into visuals to reflect on themes over time.

- Therapists, with consent, can explore gentle guided imagery as a research tool.

- Teams can create synthetic, dream-like scenes to stress-test vision models.

Guardrails that matter:

- Get explicit consent before using any personal or brain-related data.

- Label outputs as generated or reconstructed, not literal truth.

- Keep a feedback loop with real users to catch misread signals.

Common traps: anthropomorphism, overtrust, and privacy

Three traps show up all the time:

- Treating AI like a person with feelings.

- Trusting confident outputs without verification.

- Sharing sensitive dream data in tools that lack clear consent and controls.

Privacy tips:

- Export dream logs locally and encrypt them when possible.

- Turn off cloud sync if you are testing early tools.

- Remove identifiers before sharing for research or demos.

A note on anonymization:

- Avoid free-form names and places in shared datasets.

- Keep a data map so you can delete entries on request.
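The two anonymization points above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the identifier list, the participant label, and the `DreamLog` class are all hypothetical, and a simple word list will miss identifiers it has not been told about, so human review is still needed before sharing anything.

```python
import re
import uuid

# Hypothetical identifiers to strip. A real project would need a reviewed list
# (or a proper entity-detection step) plus a human check.
KNOWN_IDENTIFIERS = ["Maria", "Lisbon"]


def redact(text, identifiers):
    """Replace each listed identifier with a placeholder, ignoring case."""
    for term in identifiers:
        pattern = rf"\b{re.escape(term)}\b"
        text = re.sub(pattern, "[REDACTED]", text, flags=re.IGNORECASE)
    return text


class DreamLog:
    """Stores redacted entries plus a private map of who owns which entry."""

    def __init__(self):
        self.entries = {}   # entry_id -> redacted text (the shareable part)
        self.data_map = {}  # owner -> set of entry_ids (keep this private)

    def add(self, owner, text, identifiers):
        entry_id = uuid.uuid4().hex
        self.entries[entry_id] = redact(text, identifiers)
        self.data_map.setdefault(owner, set()).add(entry_id)
        return entry_id

    def delete_owner(self, owner):
        """Remove every entry for one owner and return how many were deleted."""
        ids = self.data_map.pop(owner, set())
        for entry_id in ids:
            self.entries.pop(entry_id, None)
        return len(ids)


log = DreamLog()
eid = log.add(
    "participant-07",
    "Maria and I walked through Lisbon on a glass bridge.",
    KNOWN_IDENTIFIERS,
)
print(log.entries[eid])
```

Keeping the data map separate from the shared entries is the design choice that makes a deletion request a single lookup instead of a search through every file.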

A simple checklist to keep claims honest

Before you publish a demo or paper, check these:

- Name the model and version you used.

- State the training sources and any fine-tuning sets.

- Clarify limits, like category-only decoding or low-resolution reconstructions.

- Separate the creative vibe from lived experience.

- Include a test plan, with accuracy checks and human review steps.
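If you prefer a checklist you can run, the items above fit in a small Python structure. This is only one possible sketch: the `ClaimCard` name, its fields, and the example model name are hypothetical, and the check only confirms that each item was filled in, not that what was written is accurate.

```python
from dataclasses import dataclass, field


@dataclass
class ClaimCard:
    """One record per demo or paper, mirroring the checklist items."""
    model_name: str = ""
    model_version: str = ""
    training_sources: list = field(default_factory=list)
    known_limits: list = field(default_factory=list)
    separates_style_from_experience: bool = False
    test_plan: str = ""


def missing_items(card):
    """Return the checklist items that are still empty or unchecked."""
    checks = {
        "model name and version": bool(
            card.model_name.strip() and card.model_version.strip()
        ),
        "training sources": len(card.training_sources) > 0,
        "stated limits": len(card.known_limits) > 0,
        "style vs. lived experience": card.separates_style_from_experience,
        "test plan": bool(card.test_plan.strip()),
    }
    return [name for name, ok in checks.items() if not ok]


# Placeholder values for illustration only.
draft = ClaimCard(
    model_name="example-image-model",
    model_version="0.1",
    known_limits=["category-only decoding"],
)
print(missing_items(draft))
```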

What It Would Take for AI to Truly “Dream”

If we ever get close to anything like real dreaming in machines, several pieces may need to come together. A system would need a stable sense of itself, memories that matter to its goals, and a cycle for offline learning that changes how it behaves when it “wakes.” On top of that, we would need a testable theory for when inner experience exists and when it does not. None of that is settled.

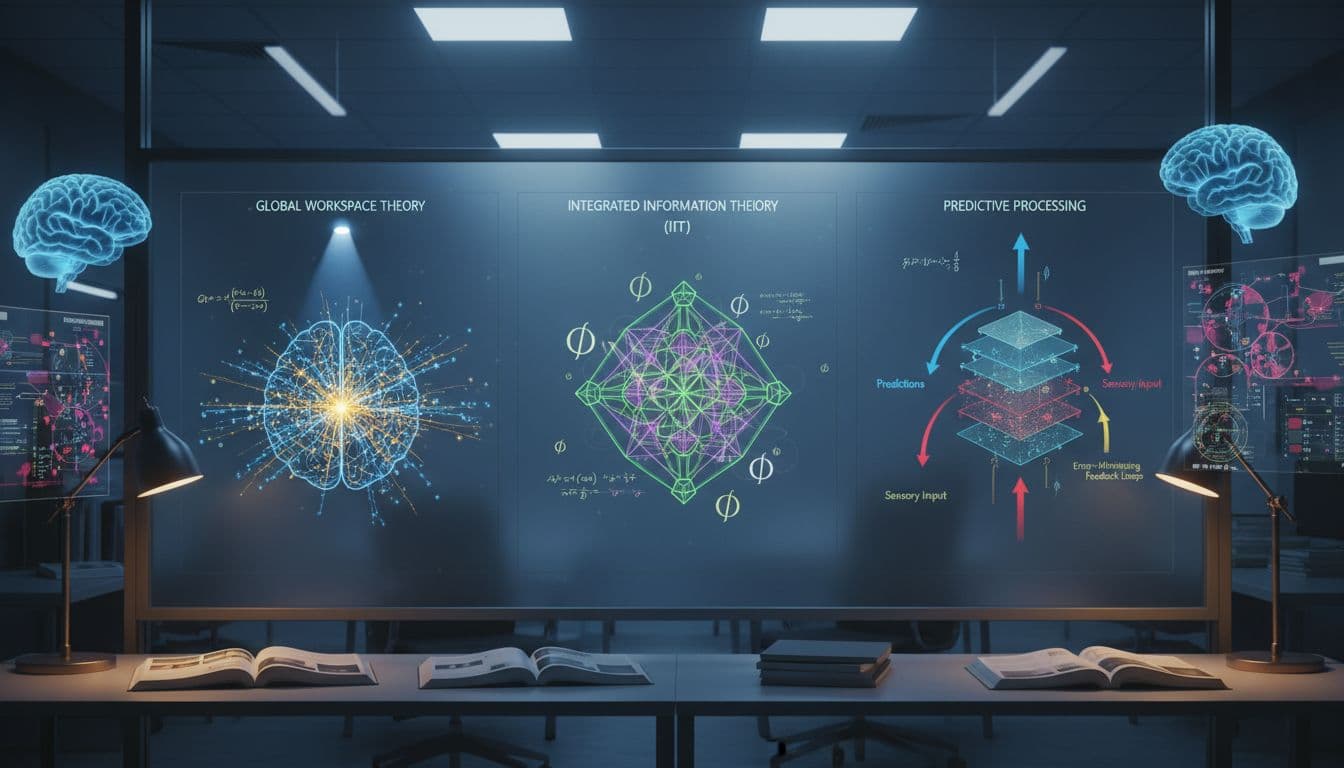

Simple tour of major ideas: global workspace, IIT, predictive processing

- Global workspace: Information becomes conscious when it is broadcast across many modules. A future AI would need a central stage that shapes decisions and memory.

- Integrated Information Theory (IIT): Consciousness scales with how integrated and differentiated the system’s information is. An AI would need to show high integrated structure, not just size.

- Predictive processing: The brain predicts inputs and updates beliefs to reduce surprise. An AI would need a long-term loop that cares about errors in a way tied to its goals and history.

None of these frameworks alone proves that a system has feelings. They give testable hooks for behavior and internal signals.

Could sleep-like “offline” learning help AI build an inner world?

Biological brains replay memories and consolidate learning during sleep. Some artificial agents already use replay buffers and offline updates. The open question is not whether offline learning helps performance. It often does. The question is whether a system that values its own memories, and can change its future plans after rest, gets closer to something like subjective experience. That is still speculative.
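For readers who have not seen a replay buffer, here is a minimal sketch in Python. It is a toy: the class and function names are hypothetical, the "experiences" are invented state and reward pairs, and the update rule is a plain running average, far simpler than what reinforcement learning agents use. It shows the mechanical part of the idea, which is storing past experience and learning from it later with no new input.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size memory: once full, the oldest experience is dropped."""

    def __init__(self, capacity):
        self.memory = deque(maxlen=capacity)

    def store(self, experience):
        self.memory.append(experience)

    def sample(self, batch_size, rng):
        """Draw a random batch without replacement, capped at what is stored."""
        k = min(batch_size, len(self.memory))
        return rng.sample(list(self.memory), k)


def offline_update(estimate, buffer, rng, steps=50, batch_size=4, lr=0.1):
    """Nudge a value estimate toward replayed rewards, using no new input."""
    for _ in range(steps):
        for _state, reward in buffer.sample(batch_size, rng):
            estimate += lr * (reward - estimate)
    return estimate


rng = random.Random(0)  # seeded so the run is repeatable
buffer = ReplayBuffer(capacity=5)
for step in range(8):
    # Invented (state, reward) pairs; only the last five are kept.
    buffer.store((f"state-{step}", 1.0))

value = offline_update(0.0, buffer, rng)
print(len(buffer.memory), round(value, 3))
```

The estimate changes during the "rest" phase, so behavior after replay can differ from behavior before it. Whether that kind of change has anything to do with experience is the speculative part.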

Signals to watch: benchmarks and behaviors that would move the needle

I track these five signals. Progress here would be interesting, even if it falls short of proof:

- Stable self-reports that match behavior across many contexts.

- Long-term memory tied to goals, not just short session buffers.

- Metacognition that transfers between tasks without retraining.

- World models that improve after rest cycles in measurable ways.

- Reproducible internal signatures that correlate with reports and behavior.

Even if we saw all five, we would still not have a direct measure of feeling. But we would have a stronger case for structured inner processing.

A quick map for readers who want the science pulse

If you want a single digestible roundup that connects AI tools and dream research, the press note on DreamConnect gives a crisp overview of language-guided control with brain data: From mind to image: Guiding dreams with AI. For a broader, even-handed take on why people often misread signs of “mind” in machines, the Science viewpoint is a useful counterweight: Illusions of AI consciousness. And if you enjoy longer essays that link neuroscience and AI without hype, the LSE piece is a nice bridge: AI, neuroscience and the magic of consciousness.

Where I land

As of 2025, “Can AI dream” has a clear answer: AI does not dream. It does help us study and visualize parts of human dreams, and it can create dream-like art at scale. The big question is not about style; it is about consciousness. Stay curious, run small experiments, and keep claims careful. If you want to learn more about how this site works, check out All about this site. Got a question or a favorite example? Share it with me, and I will explore it in a future update.